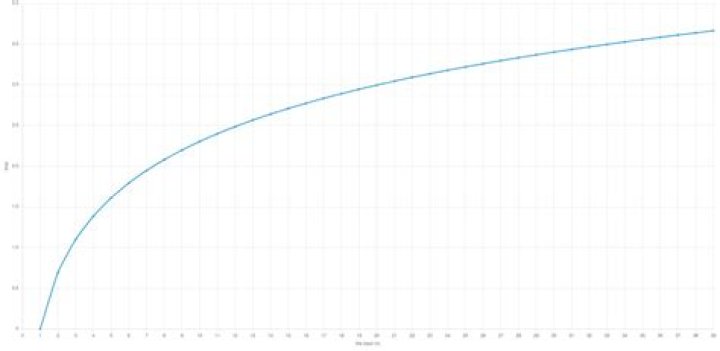

In this case, O(1) outperformed O(log n). As the cases above show, however, an O(1) algorithm does not always run faster than an O(log n) one. For small inputs, an O(log n) algorithm can outperform an O(1) algorithm with a large constant cost, but as the input size n increases, the O(log n) algorithm eventually takes more time than the O(1) one.
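To make the crossover concrete, here is a toy cost model in Python. Purely for illustration, it assumes a hypothetical O(1) routine that always costs 20 steps and a hypothetical O(log n) routine that costs floor(log2 n) + 1 steps. Neither number is a measurement. Under these assumptions, the logarithmic routine is cheaper until n reaches 2^20.

```python
# Toy cost model: both step counts are illustrative assumptions, not measurements.
CONSTANT_COST = 20  # hypothetical O(1) routine: always 20 steps


def constant_steps(n: int) -> int:
    """Steps taken by the hypothetical O(1) routine; independent of n."""
    return CONSTANT_COST


def log_steps(n: int) -> int:
    """Steps taken by the hypothetical O(log n) routine: floor(log2 n) + 1."""
    return n.bit_length()  # exact integer form of floor(log2 n) + 1 for n >= 1


def crossover() -> int:
    """Smallest n at which the O(log n) routine needs more steps than the O(1) one."""
    n = 1
    # bit_length() first reaches each new value at a power of two,
    # so testing powers of two is sufficient.
    while log_steps(n) <= constant_steps(n):
        n *= 2
    return n


if __name__ == "__main__":
    for n in (8, 1_024, crossover()):
        print(n, constant_steps(n), log_steps(n))
    print("crossover at n =", crossover())
```

Changing the assumed constant moves the crossover point, but it cannot remove it: a logarithm eventually exceeds any fixed cost.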

Which is better, O(n) or O(log n)?

O(n) means that the algorithm’s maximum running time is proportional to the input size. More generally, O(something) is an upper bound on the number of (atomic) instructions an algorithm executes. Since log n grows more slowly than n, O(log n) is a tighter bound than O(n), and an O(log n) algorithm is better in terms of algorithm analysis.
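One way to see the gap is to compare worst-case comparison counts for a linear scan (O(n)) and a binary search over sorted data (O(log n)). The sketch below uses the standard worst-case formulas rather than timing real code. The function names are hypothetical.

```python
def linear_search_worst(n: int) -> int:
    """Worst-case comparisons for a linear scan of n items: it may check every element."""
    return n


def binary_search_worst(n: int) -> int:
    """Worst-case iterations for binary search on n sorted items: floor(log2 n) + 1."""
    return n.bit_length()  # equals floor(log2 n) + 1 for n >= 1


for n in (10, 1_000, 1_000_000):
    print(f"n={n:>9,}  linear={linear_search_worst(n):>9,}  binary={binary_search_worst(n)}")
```

For a million items, the linear scan may need a million comparisons, while binary search needs at most 20.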

Is O(n) faster than O(n log n)?

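Yes. For sufficiently large n, O(n) algorithms are faster than O(n log n) ones, because n log n exceeds n by a factor of log n, and that factor keeps growing.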

Is there a better time complexity than O(1)?

Rank 1: constant time complexity. The running time of the algorithm is either independent of the data size or bounded above by a constant. Nothing is faster than O(1). A bound such as O(1/n) offers no improvement: 1/n is itself bounded above by a constant, so it falls within O(1) by definition, and no algorithm can take fewer than one step anyway.

Is an O(n^2) algorithm better than an O(n) algorithm?

No. O(n) is asymptotically faster than O(n^2), where n is the size of the data. So an algorithm that takes O(n) time to solve a problem is faster, for large enough n, than another algorithm that takes O(n^2) time to solve the same problem.

What is the fastest time complexity?

The fastest possible running time for any algorithm is O(1), commonly referred to as constant running time. In this case, the algorithm always takes the same amount of time to execute, regardless of the input size. This is the ideal runtime for an algorithm, but it’s rarely achievable.
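Here is a minimal Python illustration. Indexing into a list is a constant-time operation in CPython because a list is backed by an array: the element’s position is computed directly rather than searched for. The helper name `get_item` is hypothetical.

```python
def get_item(items: list, index: int):
    """Return items[index]. CPython computes the slot's address directly,
    so the cost does not depend on len(items)."""
    return items[index]


small = list(range(10))
large = list(range(1_000_000))
print(get_item(small, 5))        # one indexing step...
print(get_item(large, 999_999))  # ...and still one step for a list 100,000 times longer
```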

Is log n smaller than 1?

Only for very small n. With base 10, log n is greater than 1 for every n > 10. In complexity analysis, the log in n log n is usually base 2, so log n ≥ 1 for any n ≥ 2.

Which is better, n log n or log n?

O(log n) is better than O(n log n). More generally, constant time, O(1), is better than linear time, O(n), because it does not depend on the input size of the problem. From best to worst, the order is O(1), O(log n), O(n), O(n log n).

Is O(n^2) still O(n)?

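No. O(n^2) is a larger class than O(n). For example, n^2 is in O(n^2) but not in O(n), because n^2 exceeds c · n for every constant c once n > c.

| Big O notation | Name | Example(s) |
|---|---|---|
| O(n^2) | Quadratic | Duplicate elements in an array **(naïve)**; sorting an array with bubble sort |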
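Below is a sketch of the naïve duplicate check from the table, assuming the input is a Python list. Every pair of elements is compared once, which is n(n-1)/2 comparisons in the worst case, i.e. O(n^2). A set-based version is included for contrast. It runs in expected O(n) time but assumes the elements are hashable. Both function names are hypothetical.

```python
def has_duplicates_naive(items: list) -> bool:
    """Compare every pair once: n*(n-1)/2 comparisons in the worst case, i.e. O(n^2)."""
    n = len(items)
    for i in range(n):
        for j in range(i + 1, n):
            if items[i] == items[j]:
                return True
    return False


def has_duplicates_set(items: list) -> bool:
    """For contrast: one pass with a hash set, expected O(n). Items must be hashable."""
    seen = set()
    for item in items:
        if item in seen:
            return True
        seen.add(item)
    return False


print(has_duplicates_naive([3, 1, 4, 1, 5]), has_duplicates_set([3, 1, 4, 1, 5]))
```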

Can an O(1) algorithm get faster?

Its running time does not depend on the value of n, such as the size of an array or the number of loop iterations. Regardless of these factors, it always runs in a constant number of steps, for example 10 steps or 1 step. Because it performs a constant amount of work, its asymptotic complexity cannot be improved. The constant itself (10 steps versus 1) can still be reduced.

What are the best-known algorithms?

- Binary Search Algorithm.

- Breadth First Search (BFS) Algorithm.

- Depth First Search (DFS) Algorithm.

- Inorder, Preorder, Postorder Tree Traversals.

- Insertion Sort, Selection Sort, Merge Sort, Quicksort, Counting Sort, Heap Sort.

- Kruskal’s Algorithm.

- Floyd–Warshall Algorithm.

- Dijkstra’s Algorithm.

Which sorting algorithm has the best best-case complexity?

| Algorithm | Data structure | Best-case time complexity |
|---|---|---|
| Smooth sort | Array | O(n) |
| Bubble sort | Array | O(n) |
| Insertion sort | Array | O(n) |
| Selection sort | Array | O(n^2) |
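The O(n) best case for bubble sort assumes the common early-exit variant, which stops as soon as a full pass makes no swaps. Without that check, bubble sort performs O(n^2) comparisons even on already-sorted input. Here is a sketch of that variant, with a comparison counter added for illustration:

```python
def bubble_sort(items: list) -> tuple[list, int]:
    """Bubble sort with an early-exit flag.
    Returns (sorted copy, number of comparisons made)."""
    a = list(items)
    comparisons = 0
    for end in range(len(a) - 1, 0, -1):
        swapped = False
        for i in range(end):
            comparisons += 1
            if a[i] > a[i + 1]:
                a[i], a[i + 1] = a[i + 1], a[i]
                swapped = True
        if not swapped:  # a pass with no swaps means the list is sorted
            break
    return a, comparisons


print(bubble_sort(list(range(100)))[1])         # 99: already sorted, one pass (best case)
print(bubble_sort(list(range(100, 0, -1)))[1])  # 4950: reversed, every pass (worst case)
```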

What is n log n?

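In O(n log n), n is the input size (the number of elements), and log n is usually the logarithm to base 2. This complexity typically arises from the divide-and-conquer approach: we divide the problem into subproblems (divide), solve them separately (conquer), and then combine the solutions (combine). In merge sort, for instance, the input is halved about log n times, and each level of halving needs O(n) work to combine the results, giving O(n log n) in total.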
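Merge sort is the textbook divide-and-conquer example. What follows is a minimal, non-in-place sketch in Python, not a tuned implementation. It splits the list in half, sorts each half recursively, and then merges the two sorted halves in linear time.

```python
def merge_sort(items: list) -> list:
    """Return a sorted copy of items using divide and conquer."""
    if len(items) <= 1:
        return list(items)
    mid = len(items) // 2
    left = merge_sort(items[:mid])   # divide, then conquer the left half
    right = merge_sort(items[mid:])  # divide, then conquer the right half

    # Combine: merge two sorted lists in O(n) time.
    merged = []
    i = j = 0
    while i < len(left) and j < len(right):
        if left[i] <= right[j]:
            merged.append(left[i])
            i += 1
        else:
            merged.append(right[j])
            j += 1
    merged.extend(left[i:])
    merged.extend(right[j:])
    return merged


print(merge_sort([5, 2, 9, 1, 5, 6]))
```

Each of the roughly log2 n levels of recursion does O(n) merging work in total, which is where the n log n comes from.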

Is log n less than n?

Yes. log n is smaller than n for every n ≥ 1, so an algorithm of complexity O(log n) is better. For large inputs it will be much faster.

What is the order of time complexity?

Time complexity, or order of growth, describes the amount of time a program takes as a function of the size of its input. In other words, it specifies how the program behaves as the input size increases.

Is O(n^2) better than O(n log n)?

No. O(N log N) is far better than O(N^2), and it is much closer to O(N) than to O(N^2). In practice, though, an O(N^2) algorithm with small constant factors can be faster than an O(N log N) one for small inputs, for example N < 100.
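The small-N advantage comes from constant factors. Purely for illustration, the toy model below assumes that the quadratic algorithm costs n^2 steps while the O(n log n) algorithm carries a 15× overhead per step. With that made-up constant, the crossover happens to land at n = 100. Real crossover points depend entirely on the implementations and the hardware. All names here are hypothetical.

```python
import math

OVERHEAD = 15  # assumed per-step overhead of the O(n log n) algorithm (illustrative only)


def quadratic_cost(n: int) -> float:
    """Hypothetical cost of the O(n^2) algorithm: n^2 cheap steps."""
    return float(n * n)


def n_log_n_cost(n: int) -> float:
    """Hypothetical cost of the O(n log n) algorithm: OVERHEAD * n * log2(n) steps."""
    return OVERHEAD * n * math.log2(n)


def crossover(start: int = 2) -> int:
    """Smallest n >= start at which the quadratic algorithm becomes more expensive.
    Starts at 2 because log2(1) == 0 would make the n log n cost zero."""
    n = start
    while quadratic_cost(n) <= n_log_n_cost(n):
        n += 1
    return n


print("quadratic algorithm is cheaper below n =", crossover())
```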

Is N^3 in O(2^N)?

Yes, it is. With pencil and paper, draw a graph of N^3 versus 2^N: for large enough values of N, 2^N overtakes N^3 and stays above it. (A calculator may give misleading results here because of overflow.)
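Python integers have arbitrary precision, so this comparison can be checked exactly, without the overflow a calculator might hit. The sketch below (function name hypothetical) finds the point from which 2^n stays above n^3. It checks only up to an assumed limit of 100, so it illustrates the claim rather than proving it.

```python
def exponential_overtakes_cubic(limit: int = 100) -> int:
    """Smallest n such that 2**m > m**3 for every m in [n, limit], or -1 if none.
    Only checks up to `limit`: an illustration, not a proof."""
    for n in range(1, limit + 1):
        if all(2**m > m**3 for m in range(n, limit + 1)):
            return n
    return -1


for n in range(8, 12):
    print(n, n**3, 2**n)  # exact big-integer arithmetic: no overflow
print("2^n stays above n^3 from n =", exponential_overtakes_cubic())
```

It reports n = 10. There, 2^10 = 1024 just exceeds 10^3 = 1000, while one step earlier 2^9 = 512 is still below 9^3 = 729.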

What is the difference between O(n) and O(n^2)?

Both bounds can apply to the same algorithm. An algorithm with linear running time is in both O(n) and O(n^2), since O denotes only an upper bound, and any algorithm that grows no faster than n^2 asymptotically is also in O(n^2). Mathematically, O(n^2) is the set of functions that grow at most as fast as c · n^2, for some constant c > 0 and all sufficiently large n.

Is log(n^2) in O(log n)?

Yes. A function such as log^2 n + log n, that is, (log n)^2 + log n, is O(log^2 n). However, any function of the form log(n^k) is O(log n) when k is a constant, because log(n^k) = k · log n. In particular, log(n^2) = 2 log n is O(log n).
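Here is a numeric sanity check of both claims, using base-2 logarithms (the base does not change the asymptotic class). It shows that log(n^k) matches k · log n, and that the log n term becomes a shrinking share of log^2 n + log n as n grows. The function names are hypothetical.

```python
import math


def log_of_power(n: int, k: int) -> float:
    """log2(n**k), computed directly from the power."""
    return math.log2(n ** k)


def k_times_log(n: int, k: int) -> float:
    """k * log2(n); by the identity log(n^k) = k log n this equals log_of_power(n, k)."""
    return k * math.log2(n)


def ratio_to_log_squared(n: int) -> float:
    """(log^2 n + log n) / log^2 n, which tends to 1 as n grows."""
    lg = math.log2(n)
    return (lg * lg + lg) / (lg * lg)


for n in (10, 1_000, 10**6):
    print(n, log_of_power(n, 2), k_times_log(n, 2), ratio_to_log_squared(n))
```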

What is the time complexity of binary search?

The time complexity of the binary search algorithm is O(log n). The best-case time complexity is O(1), when the element at the middle index matches the target value on the first comparison.
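Here is a standard iterative binary search in Python, with an iteration counter added for illustration. Each iteration halves the remaining range, so on n sorted items the search runs at most floor(log2 n) + 1 iterations. When the target sits at the middle index, it finishes after one.

```python
def binary_search(sorted_items: list, target) -> tuple[int, int]:
    """Return (index of target or -1, number of loop iterations used)."""
    lo, hi = 0, len(sorted_items) - 1
    iterations = 0
    while lo <= hi:
        iterations += 1
        mid = (lo + hi) // 2
        if sorted_items[mid] == target:
            return mid, iterations
        if sorted_items[mid] < target:
            lo = mid + 1  # target can only be in the upper half
        else:
            hi = mid - 1  # target can only be in the lower half
    return -1, iterations


data = list(range(1_000))
print(binary_search(data, 499))    # middle element: found on the first iteration (best case)
print(binary_search(data, 1_000))  # absent: at most 10 iterations for 1,000 items
```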

Which complexity is the slowest?

Among algorithms that run in n^2, n^3, and 2^n time, the n^2 algorithm is the fastest, the n^3 algorithm comes next, and the 2^n algorithm is the slowest, since exponential growth eventually outpaces any polynomial.

Which is the slowest time complexity?

Fastest: O(1). The speed remains constant and is unaffected by the size of the data set. Slowest: O(n^n). Among the complexity classes commonly listed, its running time grows the fastest, which makes it the most time-consuming to execute.

Is Big O the worst case?

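Big O, commonly written as O, is an asymptotic notation for the ceiling of growth of a given function: it provides an asymptotic upper bound on the growth rate of an algorithm’s running time. It is most often used to describe the worst case, but strictly speaking it is an upper bound, and it can be applied to best-case, average-case, or worst-case running time.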

Is O((n+1)!) the same as O(n!)?

No. Since (n+1)! = (n+1) · n!, O((n+1)!) is O((n+1) · n!), which is a factor of O(n) larger than O(n!).

What is O(log n) time complexity?

Logarithmic running time (O(log n)) essentially means that the running time grows in proportion to the logarithm of the input size. For example, if 10 items take at most some amount of time x, 100 items take at most, say, 2x, and 10,000 items take at most 4x, then it’s looking like an O(log n) time …
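The example’s numbers follow from the logarithm itself. Whatever the base, log 100 is twice log 10, and log 10,000 is four times log 10, because the base cancels in the ratio. Here is a minimal sketch with a hypothetical helper name:

```python
import math


def relative_time(n: int, reference_n: int = 10) -> float:
    """Time for n items relative to reference_n items, if time grows like log n.
    The log base cancels in the ratio, so natural log works as well as any other."""
    return math.log(n) / math.log(reference_n)


for n in (10, 100, 10_000):
    print(f"{n:>6} items -> {relative_time(n):.1f}x")
```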

Why is Ω(n^2) bad?

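An algorithm with quadratic time complexity scales poorly: if you increase the input size by a factor of 10, the running time increases by a factor of about 100. Ω(n^2) means the running time is at least quadratic, so such an algorithm scales at least this badly.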
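Counting the comparisons made by an all-pairs nested loop shows the factor-of-100 effect directly. This sketch assumes a loop like the naïve duplicate check, which makes n(n-1)/2 comparisons. The helper name is hypothetical.

```python
def count_pair_comparisons(n: int) -> int:
    """Count the inner-loop steps of an all-pairs loop over n items: n*(n-1)/2."""
    count = 0
    for i in range(n):
        for j in range(i + 1, n):
            count += 1
    return count


small, large = count_pair_comparisons(100), count_pair_comparisons(1_000)
print(small, large, large / small)
```

Going from 100 to 1,000 items raises the count from 4,950 to 499,500, a factor of just over 100.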

How is asymptotic notation used in worst-case and average-case analysis?

For example, the worst-case time complexity of merge sort is Θ(n log n). This means that, in worst-case analysis, merge sort makes on the order of n log n operations. Similarly, in average-case analysis, Big O notation can express an upper bound on the expected number of operations.

Is constant time fast?

A constant-time operation could be fast or slow. O(n) means that the time the function takes changes in direct proportion to the size of its input, denoted by n. Again, it could be fast or slow, but it gets slower as n increases.

Which computational complexity is considered the quickest?

Constant time complexity, O(1). Such algorithms don’t change their running time in response to the input data, which makes them asymptotically the fastest algorithms.

What is the big O notation?

Big O notation is a mathematical notation that describes the limiting behavior of a function when the argument tends towards a particular value or infinity. … In computer science, big O notation is used to classify algorithms according to how their run time or space requirements grow as the input size grows.

Which sort is fastest?

Quicksort runs in O(n log n) time in the best and average cases and O(n^2) in the worst case. Because it performs so well in the average case for most inputs, Quicksort is generally considered the “fastest” sorting algorithm.
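Here is a compact Quicksort sketch in Python. It is the simple out-of-place variant that partitions into new lists around a randomly chosen pivot, not the in-place partition scheme that production implementations typically use. The random pivot makes the O(n^2) worst case unlikely on any particular input, though not impossible.

```python
import random


def quicksort(items: list) -> list:
    """Return a sorted copy of items (out-of-place, random pivot)."""
    if len(items) <= 1:
        return list(items)
    pivot = random.choice(items)
    less = [x for x in items if x < pivot]      # partition: smaller than pivot
    equal = [x for x in items if x == pivot]    # equal elements need no further sorting
    greater = [x for x in items if x > pivot]   # partition: larger than pivot
    return quicksort(less) + equal + quicksort(greater)


print(quicksort([3, 6, 1, 8, 2, 9, 2]))
```

Keeping elements equal to the pivot in their own list stops inputs with many duplicates from degrading the recursion.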