The standard deviation is the square root of the variance, and it is a useful measure of variability when the distribution is normal or approximately normal (see below on the normality of distributions).

What is the difference between standard deviation and variability?

Standard deviation measures how spread out a group of numbers is from the mean; it is the square root of the variance. The variance is the average of the squared differences between each data point and the mean (the average of all data points).

How do you calculate variability?

- Find the mean of the data set. …

- Subtract the mean from each value in the data set. …

- Now square each of the values so that you now have all positive values. …

- Finally, divide the sum of the squares by the total number of values in the set to find the variance.
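
The steps above can be sketched in Python as follows. This is a minimal illustration, assuming the data set is treated as a whole population (so the sum of squares is divided by the number of values, as in the last step). The function name `population_variance` and the sample scores are made up for the example.

```python
def population_variance(values):
    """Return the population variance: the mean of the squared deviations."""
    n = len(values)
    mean = sum(values) / n                    # step 1: find the mean
    deviations = [v - mean for v in values]   # step 2: subtract the mean
    squares = [d ** 2 for d in deviations]    # step 3: square each deviation
    return sum(squares) / n                   # step 4: sum and divide by n


scores = [2, 4, 4, 4, 5, 5, 7, 9]  # hypothetical data, mean is 5
print(population_variance(scores))  # 4.0
```

If the values are a sample drawn from a larger population, the sum of squares is commonly divided by n - 1 instead of n.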

Does variability mean standard deviation?

The standard deviation is the average amount of variability in your dataset. It tells you, on average, how far each score lies from the mean. The larger the standard deviation, the more variable the data set is.

What is the difference between deviation and variation?

In compass navigation (rather than statistics), variation is a magnetic interference common to all vessels, while deviation is a magnetic interference unique to the vessel itself.

What does variability mean?

Variability, almost by definition, is the extent to which data points in a statistical distribution or data set diverge—vary—from the average value, as well as the extent to which these data points differ from each other.

What is meant by variability?

Variability refers to how spread out the scores in a distribution are; that is, it refers to the amount of spread of the scores around the mean. For example, distributions with the same mean can have different amounts of variability or dispersion.

What is an example of variability?

A simple measure of variability is the range, the difference between the highest and lowest scores in a set. For the example given above, the range of Drug A is 40 (100 - 60) and the range of Drug B is 10 (85 - 75). This shows that Drug A scores are dispersed over a larger range than Drug B scores.

Are variance and variability the same thing?

Variability means “lack of consistency”, and it measures how much the data varies. … Variance is the average squared deviation of a random variable from its mean. The variance of X is defined as $\mathrm{Var}(X) = E[(X - \mu)^2]$.

Is variance a measure of variability?

In statistics, variance measures variability from the average or mean. It is calculated by taking the differences between each number in the data set and the mean, then squaring the differences to make them positive, and finally dividing the sum of the squares by the number of values in the data set.

How do I calculate standard deviation?

- Work out the Mean (the simple average of the numbers)

- Then for each number: subtract the Mean and square the result.

- Then work out the mean of those squared differences.

- Take the square root of that and we are done!
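
As a rough sketch, the four steps above map onto a few lines of Python. It assumes the population form of the calculation (the plain mean of the squared differences, as in step 3). The helper name `population_std_dev` and the scores are hypothetical; `statistics.pstdev` from the standard library is shown only as a cross-check.

```python
import math
import statistics


def population_std_dev(values):
    """Square root of the mean squared difference from the mean."""
    mean = sum(values) / len(values)                    # work out the mean
    squared_diffs = [(v - mean) ** 2 for v in values]   # subtract and square
    variance = sum(squared_diffs) / len(values)         # mean of the squares
    return math.sqrt(variance)                          # take the square root


scores = [2, 4, 4, 4, 5, 5, 7, 9]  # hypothetical data
print(population_std_dev(scores))   # 2.0
print(statistics.pstdev(scores))    # 2.0, the standard-library equivalent
```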

How do you calculate variance and standard deviation?

To calculate the variance, you first subtract the mean from each number and then square the results to find the squared differences. You then find the average of those squared differences. The result is the variance. The standard deviation, a measure of how spread out the numbers in a distribution are, is the square root of the variance.

Why is standard deviation the best measure of variability?

The standard deviation is the standard or typical difference between each data point and the mean. … Conveniently, the standard deviation uses the original units of the data, which makes interpretation easier. Consequently, the standard deviation is the most widely used measure of variability.

Why is standard deviation used instead of variance?

Standard deviation and variance are closely related descriptive statistics, though standard deviation is more commonly used because it is more intuitive with respect to units of measurement; variance is reported in the squared values of units of measurement, whereas standard deviation is reported in the same units as …

What is another term for variability?

capriciousness, changeability, inconstancy, volatility, fluctuation, unsteadiness, unreliability, changeableness, vacillation, mutability

What is variability in breeding?

Genetic variability is either the presence of, or the generation of, genetic differences. … Genetic variability in a population is important for biodiversity.

How does Standard Deviation summarize variability?

Standard deviation shows how much variation (dispersion, spread, scatter) from the mean exists. … It is a popular measure of variability because it returns to the original units of measure of the data set. A low standard deviation indicates that the data points tend to be very close to the mean.

What does standard deviation measure?

A standard deviation (or σ) is a measure of how dispersed the data is in relation to the mean. Low standard deviation means data are clustered around the mean, and high standard deviation indicates data are more spread out.

What are the units of variance and standard deviation?

Variance is the average of the squared deviations from the mean, while standard deviation is the square root of this number. Both measures reflect variability in a distribution, but their units differ: standard deviation is expressed in the same units as the original values (e.g., minutes or meters), whereas variance is expressed in squared units (e.g., minutes squared or meters squared).

How do you convert units into standard deviation?

Suppose the mean is 72 inches and SD = 6 inches. To switch from inches to feet, multiply the mean by 1/12 (that is, divide 72 by 12), which gives 6. Since the new unit is feet, the mean is 6 feet. To convert the standard deviation, multiply or divide it by the same constant you used on the mean: 6 inches divided by 12 is 0.5 feet.
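
A short Python check can make the scaling rule concrete. The heights below are invented so that the mean is 72 inches and the population standard deviation is 6 inches; the variable names are illustrative only.

```python
import statistics

INCHES_PER_FOOT = 12

# Hypothetical heights chosen so that mean = 72 in and SD = 6 in.
heights_in = [66, 78, 66, 78]
heights_ft = [h / INCHES_PER_FOOT for h in heights_in]

mean_in = statistics.mean(heights_in)    # 72 inches
sd_in = statistics.pstdev(heights_in)    # 6 inches
mean_ft = statistics.mean(heights_ft)    # 6 feet
sd_ft = statistics.pstdev(heights_ft)    # 0.5 feet

# Rescaling the summary statistics gives the same result as rescaling the data.
print(mean_in / INCHES_PER_FOOT, mean_ft)
print(sd_in / INCHES_PER_FOOT, sd_ft)
```

Note that the variance scales by the square of the constant, so it would be divided by 144 rather than 12.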

What is standard deviation and example?

The standard deviation measures the spread of the data about the mean value. For example, the following two data sets have the same mean: 15, 15, 15, 14, 16 and 2, 7, 14, 22, 30. … However, the second is clearly more spread out. If a set has a low standard deviation, the values are not spread out too much.
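
To see this numerically, the two sets can be compared with Python's standard `statistics` module. This sketch assumes the population standard deviation (`pstdev`); the variable names are illustrative.

```python
import statistics

set_a = [15, 15, 15, 14, 16]
set_b = [2, 7, 14, 22, 30]

mean_a, mean_b = statistics.mean(set_a), statistics.mean(set_b)
sd_a, sd_b = statistics.pstdev(set_a), statistics.pstdev(set_b)

print(mean_a, mean_b)                  # 15 15
print(round(sd_a, 2), round(sd_b, 2))  # 0.63 10.08
```

Both sets have a mean of 15, but the second set's standard deviation is roughly sixteen times larger.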

What is standard deviation formula with example?

Standard deviation formula example: Subtracting the mean from each number, you get (1 – 4) = –3, (3 – 4) = –1, (5 – 4) = +1, and (7 – 4) = +3. Squaring each of these results, you get 9, 1, 1, and 9. Adding these up, the sum is 20. … The standard deviation for these four quiz scores is 2.58 points.
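
The 2.58 figure corresponds to the sample standard deviation, where the sum of squares (20) is divided by n - 1 = 3 before taking the square root. A quick check with Python's standard library, using the four quiz scores from the example (the variable names are illustrative):

```python
import statistics

quiz_scores = [1, 3, 5, 7]

sample_sd = statistics.stdev(quiz_scores)       # divides by n - 1
population_sd = statistics.pstdev(quiz_scores)  # divides by n

print(round(sample_sd, 2))      # 2.58
print(round(population_sd, 2))  # 2.24
```

Dividing by n instead gives about 2.24, so it is worth stating which version is being reported.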

Why do you need a measure of variability?

Why do you need to know about measures of variability? You need to be able to understand how the degree to which data values are spread out in a distribution can be assessed using simple measures to best represent the variability in the data.

How do you find the variance and standard deviation of ungrouped data?

The procedure for calculating the variance and standard deviation for ungrouped data is as follows. First sum up all the values of the variable X, divide this by n and obtain the mean, that is, $\bar{X} = \sum X / n$. Next subtract each individual value of X from the mean to obtain the differences about the mean.

How do you find the variance and standard deviation of a probability distribution?

To find the variance $\sigma^2$ of a discrete probability distribution, find each deviation from its expected value, square it, multiply it by its probability, and add the products. To find the standard deviation $\sigma$ of a probability distribution, simply take the square root of the variance $\sigma^2$.
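
As an illustration, here is a minimal Python sketch of that recipe applied to a fair six-sided die. The die is an assumed example, and `distribution_variance` is a hypothetical helper name.

```python
import math


def distribution_variance(outcomes, probabilities):
    """Variance of a discrete distribution: sum of p * (x - mean)^2."""
    # Expected value: each outcome weighted by its probability.
    mean = sum(x * p for x, p in zip(outcomes, probabilities))
    # Square each deviation, weight it by its probability, and add up.
    return sum(p * (x - mean) ** 2 for x, p in zip(outcomes, probabilities))


faces = [1, 2, 3, 4, 5, 6]
probs = [1 / 6] * 6  # fair die: every face equally likely

variance = distribution_variance(faces, probs)
std_dev = math.sqrt(variance)
print(round(variance, 4), round(std_dev, 4))  # 2.9167 1.7078
```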

Does higher standard deviation mean more variability?

Explanation: Standard deviation measures how much your entire data set differs from the mean. The larger your standard deviation, the more spread or variation in your data. … There is greater variability in the test scores.

Which measure of variability is considered most reliable?

Standard Deviation (S. D.): One of the most stable measures of variability, it is the most important and commonly used measure of dispersion. It measures the absolute dispersion or variability of a distribution.

How do you know which measure of variability is best?

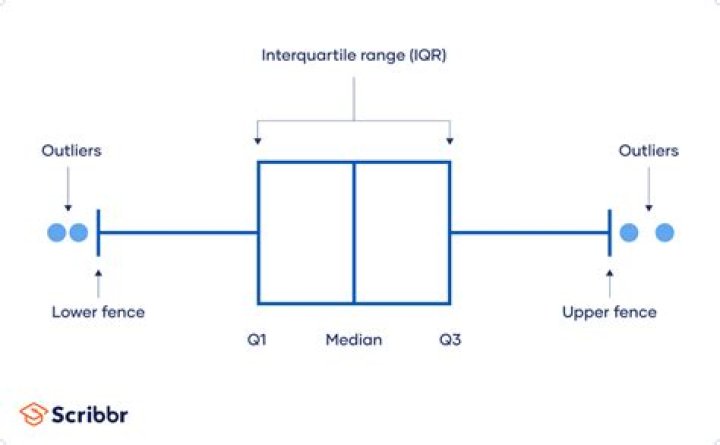

The interquartile range is the best measure of variability for skewed distributions or data sets with outliers. Because it’s based on values that come from the middle half of the distribution, it’s unlikely to be influenced by outliers.
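
A small Python sketch can show this robustness. It assumes the quartile convention used by `statistics.quantiles` (available in Python 3.8 and later; other conventions give slightly different quartiles), and the data and helper names are invented for the example.

```python
import statistics


def iqr(values):
    """Interquartile range: third quartile minus first quartile."""
    q1, _median, q3 = statistics.quantiles(values, n=4)
    return q3 - q1


def value_range(values):
    """Range: highest value minus lowest value."""
    return max(values) - min(values)


clean = [1, 2, 3, 4, 5, 6, 7, 8, 9, 10]
with_outlier = [1, 2, 3, 4, 5, 6, 7, 8, 9, 1000]  # one extreme value

print(iqr(clean), iqr(with_outlier))                  # 5.5 5.5
print(value_range(clean), value_range(with_outlier))  # 9 999
```

The single extreme value inflates the range from 9 to 999 but leaves the interquartile range unchanged in this example.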

Which is better variance or standard deviation?

Both variance and standard deviation describe how data in a population are spread around the mean, but standard deviation gives a clearer picture of how far the data deviate from the mean because it is expressed in the same units as the data.

Is standard deviation better than variance?

They each have different purposes. The SD is usually more useful to describe the variability of the data while the variance is usually much more useful mathematically. For example, the sum of uncorrelated distributions (random variables) also has a variance that is the sum of the variances of those distributions.
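
The additivity property can be checked exactly for a simple case. The sketch below assumes two independent fair dice (independence implies the two are uncorrelated) and uses exact fractions; the helper name `variance` is illustrative.

```python
from fractions import Fraction
from itertools import product


def variance(outcomes):
    """Population variance of equally likely outcomes, as an exact fraction."""
    n = len(outcomes)
    mean = Fraction(sum(outcomes), n)
    return sum((x - mean) ** 2 for x in outcomes) / n


die = [1, 2, 3, 4, 5, 6]
# All 36 equally likely totals from rolling two independent dice.
sums = [a + b for a, b in product(die, repeat=2)]

var_die = variance(die)
var_sum = variance(sums)
print(var_die, var_sum, var_die + var_die == var_sum)  # 35/12 35/6 True
```

The standard deviations do not add in the same way: the standard deviation of the total is the square root of 35/6, not twice the square root of 35/12.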

Is variance or standard deviation bigger?

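The standard deviation is the square root of the variance. It is smaller than the variance when the variance is greater than 1.0, equal to it when the variance is exactly 0 or 1, and larger than it when the variance is between 0 and 1.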